This article explains how to perform netnography using Social Bearing. ‘Netnography’ is an academic research process designed to examine conversations on the Internet. The term combines ‘ethnography’, defined as the study and systematic recording of human cultures, with, unsurprisingly, the Internet (or net). Hence the word Net-(eth)-nography. Practitioners may use the term ‘mapping the digital landscape’ (well, that’s what I called it before moving into academia).

Social Bearing is a research tool that allows you to search for keywords, conversations or people on Twitter (see figure 1). You simply type in the term you are looking for, then click on search. Before doing this, I suggest opening the advanced search option (see figure 2): your date range is limited to the last 8 days, although the platform states that it can go back 9 days. Limiting the tweets to a specific language also helps, because the process is somewhat cumbersome and you are limited to 5000 tweets if you want to download the CSV file.

[image source_type=”attachment_id” source_value=”3090″ caption=”Figure 1: The Social Bearing Platform” align=”center” icon=”zoom” size=”medium” fitMobile=”true” autoHeight=”true” quality=”100″ lightbox=”true”]

[image source_type=”attachment_id” source_value=”3089″ caption=”Figure 2: Advance Search Filters.” align=”center” icon=”zoom” size=”medium” fitMobile=”true” autoHeight=”true” quality=”100″ lightbox=”true”]

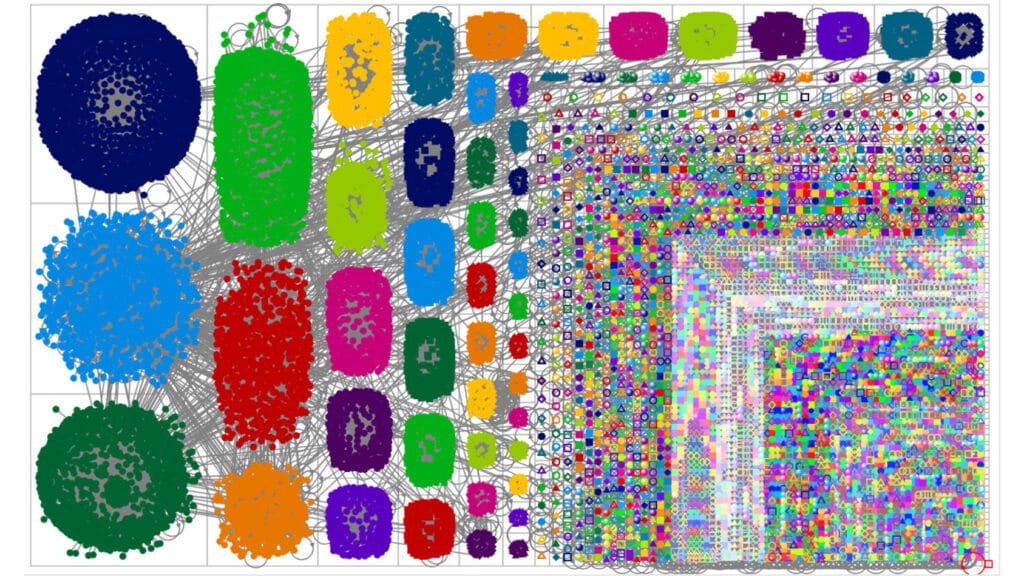

Once you have selected your term and applied any filters (through the advanced search facility), you are presented with the following screen (see figure 3): here are the results from a search on the words “digital marketing”.

[image source_type=”attachment_id” source_value=”3088″ caption=”Figure 3: Search Results.” align=”center” icon=”zoom” size=”medium” fitMobile=”true” autoHeight=”true” quality=”100″ lightbox=”true”]

Earlier, I mentioned that the process is cumbersome. This is because you only get up to 100 tweets when you begin the review. You have to click on “Load More” (below the Tweet figure) to get the next batch of up to 100 tweets. That said, the platform is very good at providing information for a netnographic research project. In this example (see figure 3), I made 11 “Load More” clicks to get the 1060 tweets. Note that you can do the review without signing in with your own Twitter account, but you may find the information you glean is limited because the Twitter API requires authentication to reduce spamming. You can sign in via your Twitter account by clicking on the icon in the top right-hand corner.
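
To plan how much clicking a search will take, the arithmetic can be sketched in a few lines of Python. This is only a rough estimate: it assumes the first batch arrives with the search itself and that every batch is full, which the text above says is not guaranteed. The function name is made up for illustration.

```python
import math

def min_load_more_clicks(target_tweets: int, batch_size: int = 100) -> int:
    """Fewest "Load More" clicks needed to have target_tweets on screen.

    Assumes the first batch arrives with the search and every batch is full.
    """
    if target_tweets <= 0:
        return 0
    batches = math.ceil(target_tweets / batch_size)
    return max(0, batches - 1)  # the first batch needs no click

print(min_load_more_clicks(1060))  # 10
print(min_load_more_clicks(5000))  # 49
```

The 1060 tweets in the example took 11 clicks rather than the minimum of 10, which is what you would expect when some batches return fewer than 100 tweets.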

What you will then see is the number of tweets that the system has captured and the timeframe, which in this case is the last 2 hours (this would be different if you had set a time range in the advanced search). You then see the Reach, which is essentially the sum of the followers of each unique individual tweeting and retweeting (although when I compare the result with the downloadable CSV file, I have a difference of 4067). The Impressions are the sum of the followers for each tweet and retweet sent, plus each reply (my calculations from the CSV file match). The total retweets, favourites and replies are self-explanatory. The hidden tweets include material that has been marked by other users as sensitive. This does not always work, because sensitive material will still appear on your screen. I have also found some examples where the sensitive tag had been applied but the actual tweet was innocuous.
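
If you want to check Reach and Impressions against the downloaded file yourself, the two definitions above can be sketched as follows. The column names (`username`, `followers`) and the sample rows are hypothetical; check the header row of your own CSV and adjust them. Counting every account in the file once for Reach, and using the last follower count seen for it, are also assumptions rather than the platform's documented method.

```python
import csv
import io

# Hypothetical sample data: column names and values are illustrative only.
SAMPLE_CSV = """username,followers,type
alice,100,tweet
bob,50,retweet
alice,100,reply
"""

def reach_and_impressions(rows, user_col="username", followers_col="followers"):
    """Return (reach, impressions) for an iterable of CSV rows (dicts)."""
    followers_by_user = {}
    impressions = 0
    for row in rows:
        followers = int(row[followers_col] or 0)
        impressions += followers  # every tweet, retweet and reply counts
        followers_by_user[row[user_col]] = followers  # each account counts once
    reach = sum(followers_by_user.values())
    return reach, impressions

rows = list(csv.DictReader(io.StringIO(SAMPLE_CSV)))
reach, impressions = reach_and_impressions(rows)
print(reach, impressions)  # 150 250
```

To run it on a real download, replace the `io.StringIO(SAMPLE_CSV)` argument with an opened file, for example `open("tweets.csv", encoding="utf-8", newline="")`, where the filename is again a placeholder.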

The sentiment listing shows the number of tweets that are classed as Great, Good, Neutral, Bad or Terrible. I would suggest that researchers ignore this field, as the algorithm used to generate the data is basic and you would find it difficult to justify the values without understanding the details behind it. Sentiment is difficult to establish because of irony, sarcasm or even the way people use English.

The tweets by type, source and linked domains are interesting from a descriptive statistics point of view but offer little ‘richness of information’. The tweets over time relate to your local time. It is a pity that the platform is unable to provide the exact time the tweet was posted in your location. The Hashtag and Word clouds are very useful: I use them to identify additional terms to add to the research.
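
The same idea as the Hashtag cloud can be reproduced on the downloaded tweet text with a simple frequency count. This is a minimal sketch, not how Social Bearing builds its cloud: the sample tweets are invented, and the pattern `#\w+` is a simplification of what Twitter accepts as a hashtag.

```python
import re
from collections import Counter

HASHTAG = re.compile(r"#\w+")  # simplified hashtag pattern (an assumption)

def hashtag_counts(texts):
    """Count hashtags across tweet texts, ignoring case."""
    counts = Counter()
    for text in texts:
        counts.update(tag.lower() for tag in HASHTAG.findall(text))
    return counts

# Invented example tweets, for illustration only.
sample = [
    "Five #SEO tips for #DigitalMarketing",
    "More #seo advice",
    "No tags here",
]
print(hashtag_counts(sample).most_common(2))
# [('#seo', 2), ('#digitalmarketing', 1)]
```

The most frequent tags that are not already in your search are candidates for additional search terms.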

You can also see tweets by country (see figure 4), but you have to click on the map to activate it. Just like Google Analytics, the deeper the green, the greater the activity. Use the map (and, for that matter, geotags) with some caution, as users are not required to include a precise location. In my experience, I find as many tweets with no location as I do with a location, and some of these locations can be words like ‘Middle Earth’.

[image source_type=”attachment_id” source_value=”3087″ caption=”Figure 4: Social Bearing Map.” align=”center” icon=”zoom” size=”medium” fitMobile=”true” autoHeight=”true” quality=”100″ lightbox=”true”]
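
Before relying on the map, it is worth checking how many rows in your download carry a location at all. The sketch below counts blank locations and tallies the remaining labels so that entries like ‘Middle Earth’ can be spotted by eye. The `location` column name and the sample rows are hypothetical.

```python
from collections import Counter

def location_summary(rows, location_col="location"):
    """Return (number of rows with no location, Counter of location labels)."""
    labels = Counter()
    blank = 0
    for row in rows:
        value = (row.get(location_col) or "").strip()
        if value:
            labels[value] += 1
        else:
            blank += 1
    return blank, labels

# Invented example rows, for illustration only.
rows = [
    {"location": "London, UK"},
    {"location": ""},
    {"location": "Middle Earth"},
    {"location": "  "},
]
blank, labels = location_summary(rows)
print(blank, len(rows) - blank)  # 2 2
print(labels.most_common())
```

A script cannot tell a real place from a joke one, so the tally is only a starting point for a manual review.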

You’ll then see a list of all the tweets, retweets and replies that have been sent (see figure 5). This is great for a quick glance, but if you are serious about undertaking netnographic research, you do have to download the data as a CSV file. Note the pop-up restrictions: you have to allow your browser to accept pop-ups before you can get the data. I suggest using Chrome, as I find it the easiest browser to use when downloading the information. Unfortunately, you will need to apply a two-step process: first load the file into Google Sheets, then download it as an Excel spreadsheet. This is because some of the icons and emojis are lost if you go straight to Excel.

[image source_type=”attachment_id” source_value=”3086″ caption=”Figure 5: Social Bearing Tweet Review.” align=”center” icon=”zoom” size=”medium” fitMobile=”true” autoHeight=”true” quality=”100″ lightbox=”true”]
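
A possible alternative to the Google Sheets step is sketched below. It rests on an assumption that has not been confirmed for Social Bearing's export: that the emojis are lost because the CSV is UTF-8 without a byte order mark, which Excel can misread when the file is opened directly. Under that assumption, rewriting the file with Python's `utf-8-sig` encoding adds the marker. The function name and file names are placeholders.

```python
import os
import tempfile

def add_utf8_bom(src_path, dst_path):
    """Copy a UTF-8 CSV to dst_path, writing it with a UTF-8 byte order mark."""
    # "utf-8-sig" on read accepts files with or without an existing BOM.
    with open(src_path, encoding="utf-8-sig", newline="") as src:
        text = src.read()
    # "utf-8-sig" on write always emits the BOM at the start of the file.
    with open(dst_path, "w", encoding="utf-8-sig", newline="") as dst:
        dst.write(text)

# Small self-contained demonstration using a temporary folder.
with tempfile.TemporaryDirectory() as tmp:
    src = os.path.join(tmp, "tweets.csv")
    dst = os.path.join(tmp, "tweets_for_excel.csv")
    with open(src, "w", encoding="utf-8", newline="") as f:
        f.write("text\nGreat campaign 👍\n")
    add_utf8_bom(src, dst)
    with open(dst, "rb") as f:
        print(f.read(3))  # b'\xef\xbb\xbf'
```

If the emojis still do not display after this, the cause lies elsewhere and the two-step route through Google Sheets remains the safer option.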

The left side of the platform provides filter options for the overview (see figure 6). However, as mentioned, I prefer to download, manipulate and analyse the data in Microsoft Excel and Access.

[image source_type=”attachment_id” source_value=”3085″ caption=”Figure 6: Filters” align=”center” icon=”zoom” size=”medium” fitMobile=”true” autoHeight=”true” quality=”100″ lightbox=”true”]

That concludes this brief overview of Social Bearing. I currently have a journal paper under review that uses Social Bearing. Once it’s published, I will give you more details on how I completed the detailed analysis.

Alan Shaw
